Welcome to the 10% Smarter newsletter. I’m Brian, a software engineer writing about tech and growing skills as an engineer.

If you’re reading this but haven’t subscribed, consider joining!

So far we’ve looked at one of the two methods of data processing, batch processing. This week we’ll look at the other method, stream processing. As a recap, stream processing consumes inputs, processes them, and produces outputs in near-real-time. This is in contrast to batch processing, which processes its input in an offline job.

Different Methods of Stream Processing

Event Streams

Events are records with a timestamp that indicates when the event happened according to a time-of-day clock. An event stream is a sequence of events sent by a producer (publisher) and potentially processed by multiple consumers (subscribers). Related events are grouped together in a topic or stream. A window is a fixed interval of time over which events are grouped and processed together.
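To make events, topics, and windows concrete, here is a minimal sketch that counts events per topic in fixed, non-overlapping (tumbling) windows. The `Event` class, the `tumbling_window_counts` function, and the sample data are hypothetical names made up for illustration; real stream processors offer several other window types.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    topic: str        # the topic (stream) the event belongs to
    timestamp: float  # event time in seconds, per the producer's clock
    payload: str


def tumbling_window_counts(events, window_seconds):
    """Count events per (topic, window start) using fixed-size windows."""
    counts = defaultdict(int)
    for event in events:
        # Round the timestamp down to the start of the window it falls in.
        window_start = int(event.timestamp // window_seconds) * window_seconds
        counts[(event.topic, window_start)] += 1
    return dict(counts)


# Hypothetical sample events for illustration only.
events = [
    Event("clicks", 0.5, "a"),
    Event("clicks", 3.2, "b"),
    Event("clicks", 5.1, "c"),
    Event("views", 4.9, "d"),
]
window_counts = tumbling_window_counts(events, window_seconds=5)
```

With a five-second window, the first two click events share the window starting at 0, while the third falls into the window starting at 5.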

Three frameworks that do stream processing are Apache Flink, Spark Streaming, and Google Cloud Dataflow.

Micro-batching and checkpointing are two strategies for achieving fault tolerance in stream processing. Spark Streaming uses micro-batching: it breaks the stream into small batches, typically around one second long. If a batch fails, it can be rerun. Apache Flink, on the other hand, periodically generates checkpoints of state and writes them to durable storage. If a stream operator crashes, it can restart from its most recent checkpoint.
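The checkpointing idea can be sketched in a few lines. This is a toy illustration, not how Flink implements it: `CountingOperator` is a hypothetical class, a plain dict stands in for durable storage, and the input is assumed to be a replayable list so the operator can resume from a saved offset.

```python
import copy


class CountingOperator:
    """Counts keys and periodically checkpoints (offset, state)."""

    def __init__(self, durable_store, checkpoint_every=3):
        self.durable_store = durable_store  # dict standing in for durable storage
        self.checkpoint_every = checkpoint_every
        # On startup, restore from the latest checkpoint if one exists.
        saved = durable_store.get("checkpoint", {"offset": 0, "state": {}})
        self.offset = saved["offset"]
        self.state = copy.deepcopy(saved["state"])

    def run(self, log, crash_at=None):
        """Process entries from the current offset; optionally simulate a crash."""
        while self.offset < len(log):
            if crash_at is not None and self.offset == crash_at:
                raise RuntimeError("simulated crash")
            key = log[self.offset]
            self.state[key] = self.state.get(key, 0) + 1
            self.offset += 1
            if self.offset % self.checkpoint_every == 0:
                # Save offset and state together so they stay consistent.
                self.durable_store["checkpoint"] = {
                    "offset": self.offset,
                    "state": copy.deepcopy(self.state),
                }
        return self.state


log = ["a", "b", "a", "c", "a", "b", "c"]
store = {}
try:
    CountingOperator(store).run(log, crash_at=5)
except RuntimeError:
    pass  # in-memory state is lost; only the checkpoint at offset 3 survives

# A fresh operator resumes from the checkpoint and reprocesses offsets 3 onward.
recovered = CountingOperator(store).run(log)
```

Because the offset and the state are saved together, the events between the last checkpoint and the crash are reprocessed exactly once after recovery, and the final counts match an uninterrupted run.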

Direct Messaging Publish/Subscribe Model

The Direct Messaging Publish/Subscribe Model uses direct communication between producers and consumers. Some things to handle when using this model are:

What happens if the producers send messages faster than the consumers can process them? The system can drop messages, buffer the messages in a queue, or apply rate limiting.

What happens if nodes crash? Are any messages lost? Durability may require writing to disk and/or replication.
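The overload options above (dropping, buffering, and signalling the producer to slow down) can be sketched with a bounded in-memory queue. `BoundedBuffer` and its `drop_oldest` flag are hypothetical names for illustration; this sketch ignores durability and concurrency entirely.

```python
from collections import deque


class BoundedBuffer:
    """Fixed-capacity queue between a fast producer and a slower consumer."""

    def __init__(self, capacity, drop_oldest=False):
        self.capacity = capacity
        self.drop_oldest = drop_oldest
        self.queue = deque()
        self.dropped = 0  # number of messages discarded so far

    def offer(self, message):
        """Try to enqueue; return False if the incoming message was rejected."""
        if len(self.queue) < self.capacity:
            self.queue.append(message)  # buffering: there is still room
            return True
        self.dropped += 1
        if self.drop_oldest:
            self.queue.popleft()        # discard the oldest message to make room
            self.queue.append(message)
            return True
        return False                    # producer can treat this as "slow down"

    def poll(self):
        """Return the next message, or None if the buffer is empty."""
        return self.queue.popleft() if self.queue else None


buffer = BoundedBuffer(capacity=2)
accepted = [buffer.offer(m) for m in ["m1", "m2", "m3"]]
```

Returning `False` from `offer` is one simple way to give the producer a signal it can use for rate limiting; whether to drop the newest or the oldest message depends on which data the application values more.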

Some different kinds of direct messaging publish/subscribe systems are:

UDP multicast, used where low latency is important. UDP itself is unreliable, but application-level protocols can recover lost packets.

Broker-less messaging libraries such as ZeroMQ

Webhooks, a pattern in which one service registers a callback URL with another service, and the other service makes a request to that URL whenever an event occurs.

Message Brokers

Message brokers are another way of sending messages from a producer to one or more consumers. A producer sends a message to a named queue or topic, and the broker ensures that the message is delivered to one or more consumers of, or subscribers to, that queue or topic. Apache Kafka is a widely used message broker.

The advantages of a message broker are that:

It can act as a buffer if the recipient is unavailable or overloaded.

A message can be delivered to multiple recipients.

It decouples the sender from the recipient. The sender does not need to know anything about the recipient.

The communication pattern here is usually asynchronous - the sender does not wait for the message to be delivered, but simply sends it and forgets about it.

Message Brokers are also usually partitioned. This allows them to scale to higher throughput than a single disk can offer. A topic can then be defined as a group of partitions that all carry messages of the same type.
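As a rough sketch of partitioning, a topic can be modelled as a list of partitions, with each message routed by a hash of its key. `partition_for` and `PartitionedTopic` are hypothetical names, and the MD5-based hash is only an assumption chosen because it is stable across runs; it is not any particular broker's partitioner.

```python
import hashlib


def partition_for(key, num_partitions):
    """Map a key to a partition index using a hash that is stable across runs."""
    digest = hashlib.md5(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % num_partitions


class PartitionedTopic:
    """A topic modelled as a group of partitions carrying the same message type."""

    def __init__(self, num_partitions):
        self.partitions = [[] for _ in range(num_partitions)]

    def append(self, key, value):
        """Append to the partition chosen by the key; return that partition index."""
        index = partition_for(key, len(self.partitions))
        self.partitions[index].append((key, value))
        return index


topic = PartitionedTopic(num_partitions=4)
first = topic.append("user-42", "login")
second = topic.append("user-42", "logout")  # same key, so same partition
```

Routing by key keeps all messages for one key in a single partition, which preserves their relative order while still spreading different keys across partitions.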

Handling Multiple Consumers

There are two patterns for sending messages to multiple consumers:

Load balancing: Each message is delivered to one of the consumers. The broker/queue may assign messages to consumers arbitrarily.

Fan-out: Each message is delivered to all of the consumers.
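The difference between the two patterns can be shown with two small functions. `load_balance` and `fan_out` are hypothetical helpers, and round-robin is just one possible assignment policy a broker might use.

```python
from itertools import cycle


def load_balance(messages, consumers):
    """Deliver each message to exactly one consumer (round-robin in this sketch)."""
    inboxes = {name: [] for name in consumers}
    for message, name in zip(messages, cycle(consumers)):
        inboxes[name].append(message)
    return inboxes


def fan_out(messages, consumers):
    """Deliver every message to every consumer."""
    return {name: list(messages) for name in consumers}


messages = ["m1", "m2", "m3"]
balanced = load_balance(messages, ["a", "b"])
broadcast = fan_out(messages, ["a", "b"])
```

Load balancing splits the work across consumers, which helps when messages are expensive to process, while fan-out lets independent consumers each see the full stream.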

Acknowledgements and Log-Based Message Brokers

Message brokers use acknowledgements: a client must explicitly tell the broker when it has finished processing a message so that the broker can remove it from the queue. Message brokers that instead keep messages in durable storage, even after a client has acknowledged them, are called log-based message brokers.

It is easy for a log-based message broker to support the fan-out messaging pattern, as several consumers can independently read the log without affecting each other.

Achieving load balancing, however, is harder. To achieve it, the broker can assign entire partitions to nodes in the consumer group. Each client then consumes all the messages in the partitions it has been assigned. While this works, the approach has some downsides. If a single message is slow to process, it holds up the processing of subsequent messages in that partition.
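A small sketch of the log-based approach: reading does not remove messages, each consumer tracks its own offset, and load balancing works at the granularity of whole partitions. `PartitionLog`, `LogConsumer`, and `assign_partitions` are hypothetical names, and the round-robin assignment is an assumption for illustration.

```python
class PartitionLog:
    """Append-only log; messages stay in place after being read."""

    def __init__(self):
        self.entries = []

    def append(self, message):
        self.entries.append(message)
        return len(self.entries) - 1  # offset of the new message

    def read_from(self, offset):
        return self.entries[offset:]


class LogConsumer:
    """Tracks its own offset, so consumers do not interfere with each other."""

    def __init__(self, log):
        self.log = log
        self.offset = 0

    def poll(self):
        batch = self.log.read_from(self.offset)
        self.offset += len(batch)
        return batch


def assign_partitions(partition_ids, consumer_names):
    """Give each consumer whole partitions (round-robin), never single messages."""
    assignment = {name: [] for name in consumer_names}
    for i, partition_id in enumerate(partition_ids):
        assignment[consumer_names[i % len(consumer_names)]].append(partition_id)
    return assignment


log = PartitionLog()
for message in ["m0", "m1", "m2"]:
    log.append(message)

indexer, auditor = LogConsumer(log), LogConsumer(log)
first = indexer.poll()   # reads m0..m2
second = auditor.poll()  # reads the same messages independently
```

Because a consumer reads its partition strictly in offset order, one slow message delays everything behind it in that partition, which is the downside described above.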

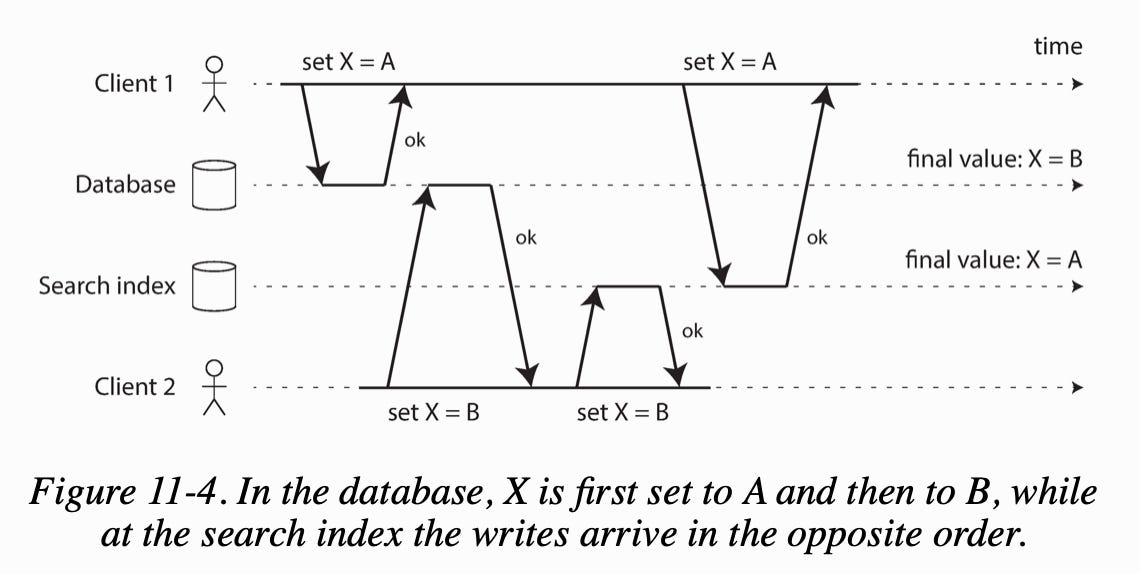

Dual Writes

If we have a system with multiple data stores that each need a copy of the data, and periodic full database dumps are too slow, dual writes are an option we can consider. With dual writes, the application writes to every data store itself instead of deriving the copies from the database. For example, we can write to a database, a full text search index, and a cache at the same time.

One major issue is race conditions: two clients writing to two different stores can have their writes arrive in a different order at each store, leaving the data inconsistent.

Another issue is that one of the writes may fail while the other succeeds, leaving the two data stores inconsistent.
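The race condition can be reproduced deterministically by replaying one unlucky interleaving. In this sketch, two plain dicts stand in for the database and the search index, and the arrival order is a made-up example in which two clients write different values for the same key.

```python
database = {}
search_index = {}

# Each tuple is (store, key, value), listed in the order the write
# arrives at that store. Client 1 writes "A"; client 2 writes "B".
arrival_order = [
    (database, "x", "A"),      # client 1 reaches the database first
    (search_index, "x", "B"),  # client 2 reaches the search index first
    (database, "x", "B"),      # client 2's database write lands second
    (search_index, "x", "A"),  # client 1's delayed index write lands last
]

for store, key, value in arrival_order:
    store[key] = value  # last write wins in each store independently

stores_agree = database["x"] == search_index["x"]
```

Neither write failed, yet the database ends with "B" and the search index ends with "A", and nothing in the system will notice or repair the mismatch on its own.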

Change Data Capture and Event Sourcing

Change data capture (CDC) is the process of observing all data changes written to a database, typically from its replication log, and extracting them in a form that can be applied to other systems.

For example, you can capture the changes in a database and continually apply the same changes to a search index.
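As a minimal sketch of this idea, the changes can be modelled as an ordered list of upserts and deletes that a consumer applies, in order, to a derived store. The change record format (`op`, `key`, `value`) and the `apply_changes` function are assumptions made for illustration, not the format of any specific CDC tool.

```python
def apply_changes(change_log, derived_store):
    """Apply captured changes, in log order, to a derived store such as an index."""
    for change in change_log:
        if change["op"] == "delete":
            derived_store.pop(change["key"], None)
        else:  # treat inserts and updates the same way: as an upsert
            derived_store[change["key"]] = change["value"]
    return derived_store


# Hypothetical changes captured from the source database, in commit order.
change_log = [
    {"op": "upsert", "key": "doc1", "value": "stream processing"},
    {"op": "upsert", "key": "doc2", "value": "batch processing"},
    {"op": "upsert", "key": "doc1", "value": "stream processing, revised"},
    {"op": "delete", "key": "doc2"},
]
search_index = apply_changes(change_log, {})
```

Since every derived store applies the same changes in the same order, they converge on the same state as the source database, which avoids the ordering problem of dual writes.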

Event sourcing is similar to change data capture in that it logs all changes so they can be applied to other systems, but it does so at the application level, recording changes to application state.

These changes are written to an append-only log of immutable events, which can be used to reconstruct the application state.
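Reconstructing state from an event log amounts to replaying the events in order. The shopping cart domain, the event shapes, and the `rebuild_cart` function below are all hypothetical choices for illustration.

```python
def rebuild_cart(events):
    """Replay immutable events in order to reconstruct the current cart state."""
    cart = {}
    for event in events:
        item, quantity = event["item"], event["quantity"]
        if event["type"] == "item_added":
            cart[item] = cart.get(item, 0) + quantity
        elif event["type"] == "item_removed":
            remaining = cart.get(item, 0) - quantity
            if remaining > 0:
                cart[item] = remaining
            else:
                cart.pop(item, None)  # nothing left of this item
    return cart


# Append-only event log: events are never edited, only added.
event_log = [
    {"type": "item_added", "item": "book", "quantity": 2},
    {"type": "item_added", "item": "pen", "quantity": 1},
    {"type": "item_removed", "item": "book", "quantity": 1},
    {"type": "item_removed", "item": "pen", "quantity": 1},
]
cart = rebuild_cart(event_log)
```

Note that the events describe what the user did rather than the resulting row values, which is the main difference from change data capture.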

What Can You Do After Processing Streams?

After processing streams, there are several options:

You can take the data in the events and write it to the database, cache, search index, or similar storage system, from where it can then be queried by other clients.

You can push the events to users in some way, for example by sending email alerts or push notifications, or to a real-time dashboard.

You can process one or more input streams to produce one or more output streams.

Conclusion

This article explored stream processing, looking at event streams, the direct messaging publish/subscribe model, and message brokers. Feel free to subscribe as we look at the future of data systems next week, and share this article if you liked it!