The Trouble with Distributed Systems

Chapter 8 of Designing Data Intensive Applications: The Trouble with Distributed Systems

Welcome to the 10% Smarter newsletter. I’m Brian, a software engineer writing about tech and growing skills as an engineer.

If you’re reading this but haven’t subscribed, consider joining!

What makes distributed systems so hard? Distributed Systems have many failure modes and require very precise settings that are easy to mess up. They involve multiple machines and become harder to debug. Let’s look at some of the problems that can arise when dealing with distributed systems.

Communication Between Shared-Nothing Systems

Most Distributed Systems are Shared-Nothing Systems. These are systems where machines communicate only through the network and have their own memory and disk. They are unable to access other machines' memory or disk.

These machines require no special hardware and can use cloud computing services. They achieve high reliability through redundancy across multiple distributed datacenters.

Messages are sent through the internet and internal networks in asynchronous packet networks. Messages send have no guarantees when it will arrive or if it will arrive. The request or response may be lost in the network, stuck in a queue, or a remote node may have failed. We handle this with a timeout, after some time, give up waiting.

Packets can be lost, reordered, duplicated, or delayed in a network. To deal with this, we use Transmission Control Protocol (TCP). TCP provides a reliable layer on top of an unreliable IP network layer. It re-transmits missing packets, eliminates duplicates, and reassembles packets in the right order.

The Trouble With Clocks

In addition to network packet issues, another unfortunate problem we face when working with multiple machines is that clocks are unreliable. Every machine in a network has its own clock, which is unreliable and may be slightly faster or slower than all other machines. Network Time Protocol (NTP) is one way to sync clocks using a GPS receiver.

There are two types of clocks computers use:

Time-of-day clocks (also known as wall-clock time) are like standard clocks and give the current date and time according to a calendar. They are synchronized with NTP but may be forced to jump back to a previous point in time if they are too far ahead. This makes them unsuitable for measuring elapsed time.

Monotonic clocks are guaranteed to always move forward and can give elapsed time.

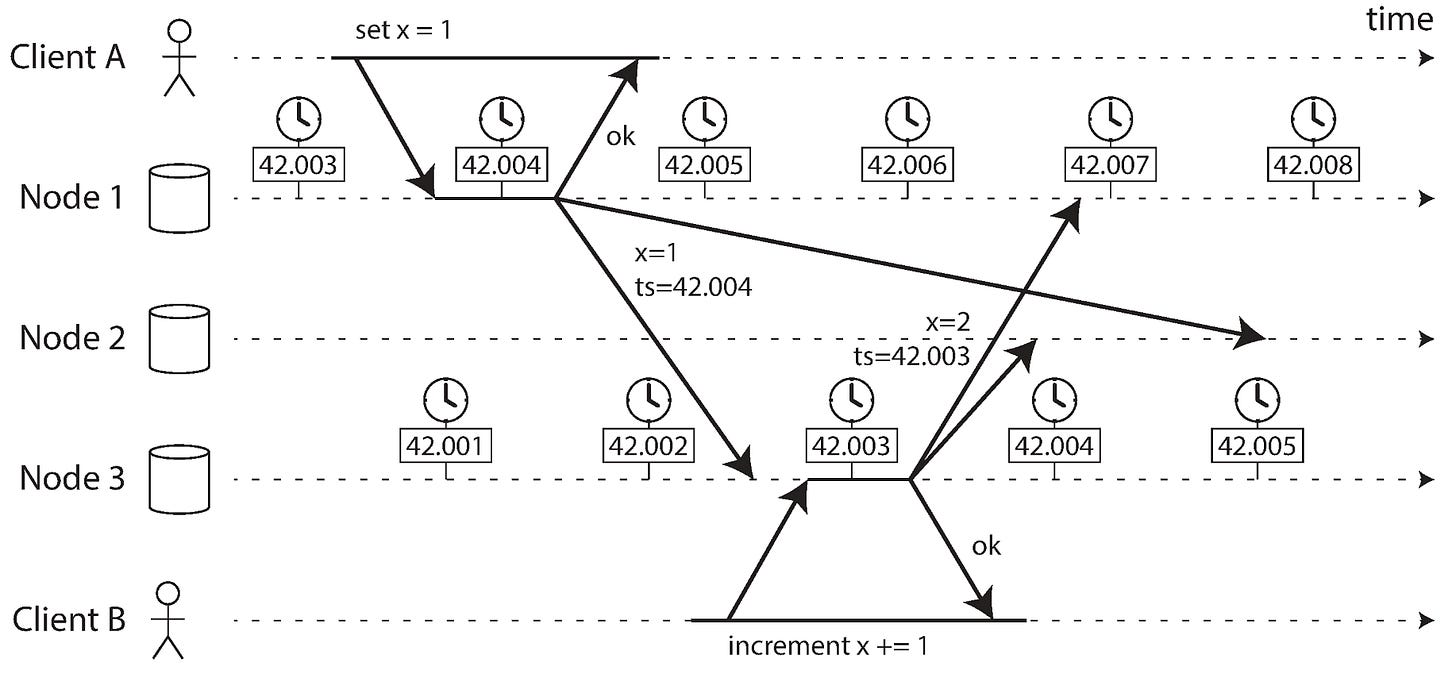

It is dangerous to rely on clocks for ordering of events across multiple nodes. One example is when using last write wins such as in Cassandra, this may lead to data loss.

For example, Node 1's clock is behind Node 3's clock. This means Client A's write is ordered incorrectly behind Client B and could be dropped in last write wins.

Leap seconds are also tricky to deal with. They will lead to a minute that is 59 seconds or 61 seconds long. Many systems were not designed with leap seconds in mind, and have lead to crashes as a result.

Logical Clocks

Logical clocks are based on counters instead of physical clocks that use oscillating quartz crystal, are safer alternative for ordering events. They only measure relative ordering of events.

Spanner

Spanner is a database created by Google that implements snapshot isolation across datacenters by using multiple clocks’ confidence interval. If you have two confidence intervals where

A = [A earliest, A latest], B = [B earliest, B latest] And those two intervals do not overlap (A earliest < A latest < B earliest < B latest), then B definitively happened after A.

Spanner deliberately waits for the length of the confidence interval (approximately 7ms) before committing a read-write transaction, so their confidence intervals do not overlap.

To keep the clock uncertainty as small as possible, Google deploys a GPS receiver or atomic clock in each datacenter.

Leader Leases

A node can known it is a leader by obtaining a lease from other nodes. The node is leader until the lease expires and the node must periodically renew the lease. If the node fails, another node can apply for a lease.

The lease timeout must be careful to handle timing issues such as

Garbage collectors which stop all running threads

Virtual machine can be suspended

Pausing threads due to slow synchronous IO operation

Due to unreliable clocks, a node cannot trust its own judgement. Many distributed systems rely on a quorum, which is a vote among the nodes where an absolute majority agree on a state.

Lock Services and Fencing Tokens

It's common to use a Lock Service like ZooKeeper to grant locks on writing to storage service. Every time the lock service grants a lock, it also returns a fencing token which monotonically increases every time a lock is granted. Every time a client writes to a storage service, it includes its current fencing token. The storage service can reject writes from old tokens avoid risks such as garbage collection pauses.

Byzantine Faults and Byzantine Fault Tolerant Systems

Fencing tokens can help block a node in error. If nodes may lie such as using fake fencing tokens, this a much harder problem to deal with. That behavior is known as a Byzantine Fault and systems that are designed to handle these faults are Byzantine Fault Tolerant Systems.

Conclusion

This covers some problems that we face, such as unreliable network and unreliable clocks when designing distributed systems. Stay tuned as we look at strategies to deal with theses issue and how distributed systems can deal with them in practice. Feel free to share this article if you liked it!